[I’m still waiting for Geoffrey Miller to OK the interview transcript, so it’s time for some math geekery.]

I visited a friend yesterday, and after we finished our first bottle of Merlot I brought up the Monty Hall problem, as one does. My friend confessed that she had never heard of the problem. Here’s how it goes:

You are faced with a choice of three doors. Behind one of them is a shiny pile of utilons, while the other two are hiding avocados that are just starting to get rotten and maybe you can convince yourself that they’re still OK to eat but you’ll immediately regret it if you try. The original formulation talks about a car and two goats, which always confused me because goats are better for racing than cars.

Anyway, you point to Door A, at which point the host of the show (yeah, it’s a show, you’re on TV) opens one of the other doors to reveal an almost-rotten avocado. The host knows what hides behind each door, always offers the switch, and never opens the one with the prize because that would make for boring TV.

You now have the option of sticking with Door A or switching to the third door that wasn’t opened. Should you switch?

After my friend figured out the answer she commented that:

- The correct answer is obvious.

- The problem is pretty boring.

- Monty Hall isn’t relevant to anything you would ever come across in real life.

Wrong, wrong, and wrong.

According to Wikipedia, only 13% of people figure out that switching doors improves your chance of winning from 1/3 to 2/3. But even more interesting is the fact that an incredible number of people remain unconvinced even after seeing several proofs, simulations, and demonstrations. The wrong answer, that the doors have an equal 1/2 chance of avocado, is so intuitively appealing that even educated people cannot overcome that intuition with mathematical reason.
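If you'd rather check the 1/3 versus 2/3 claim than take it on faith, here is a minimal simulation sketch. It assumes the rules as stated above (the host knows where the prize is, never opens your door or the prize door, and always offers the switch), plus one assumption of mine: when the host has two avocado doors to choose from, he picks between them at random. The function names `play` and `win_rate` are made up for this illustration.

```python
import random


def play(switch: bool, rng: random.Random) -> bool:
    """Play one round and return True if the player ends up with the prize."""
    doors = ["A", "B", "C"]
    prize = rng.choice(doors)
    pick = "A"  # the player always starts by pointing at Door A, as in the story
    # The host opens a door that is neither the player's pick nor the prize.
    # Assumption: if two such doors exist, he chooses between them at random.
    opened = rng.choice([d for d in doors if d != pick and d != prize])
    if switch:
        # Move to the one remaining door that is neither picked nor opened.
        pick = next(d for d in doors if d != pick and d != opened)
    return pick == prize


def win_rate(switch: bool, rounds: int = 100_000, seed: int = 0) -> float:
    """Estimate the winning probability of a strategy over many rounds."""
    rng = random.Random(seed)
    return sum(play(switch, rng) for _ in range(rounds)) / rounds


if __name__ == "__main__":
    print(f"stick:  {win_rate(switch=False):.3f}")
    print(f"switch: {win_rate(switch=True):.3f}")
```

With enough rounds the two estimates should settle near 1/3 for sticking and 2/3 for switching.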

I’ve written a lot recently about decoupling. At the heart of decoupling is the ability to override an intuitive System 1 answer with a System 2 answer that’s arrived at by applying logic and rules. This ability to override is what’s measured by the Cognitive Reflection Test, the standard test of rationality. Remarkably, many people fail to improve on the CRT even after taking it multiple times.

When rationalists talk about “me” and “my brain”, the former refers to their System 2 and the latter to their System 1 and their unconscious. “I wanted to get some work done, but my brain decided it needs to scroll through Twitter for an hour.” But in almost any other group, “my brain” is System 2, and what people identify with is their intuition.

Rationalists often underestimate the sheer inability of many people to take this first step towards rationality, of realizing that the first answer that pops into their heads could be wrong on reflection. I’ve talked to people who were utterly confused by the suggestion that their gut feelings may not correspond perfectly to facts. For someone who puts a lot of weight on System 2, it’s remarkable to see people for whom it may as well not exist.

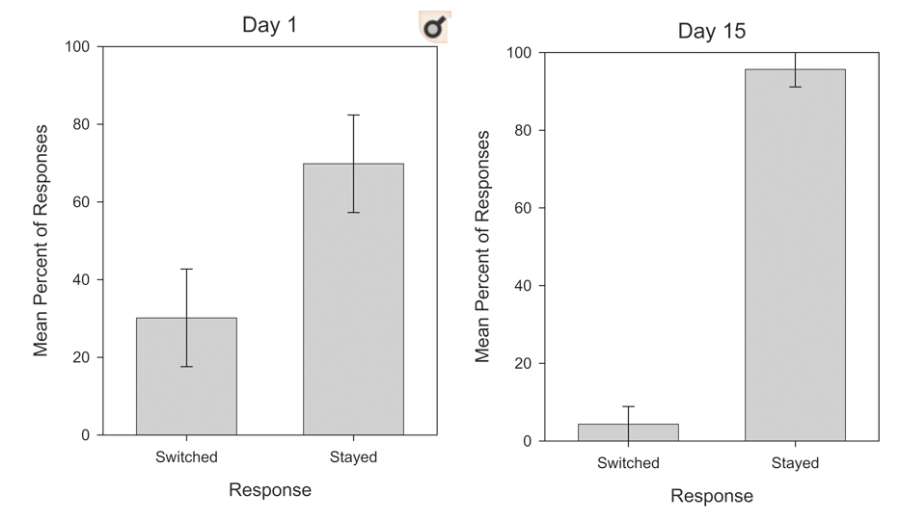

You know who does really well on the Monty Hall problem? Pigeons. At least, pigeons quickly learn to switch doors when the game is repeated multiple times over 30 days and they can observe that switching doors is twice as likely to yield the prize.

This isn’t some bias in favor of switching either, because when the condition is reversed so the prize is made to be twice as likely to appear behind the door that was originally chosen, the pigeons update against switching just as quickly:

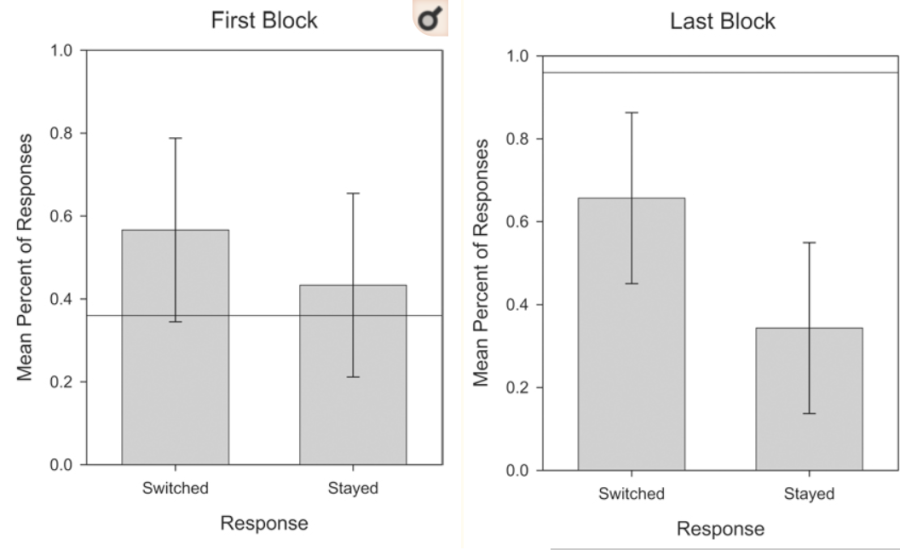

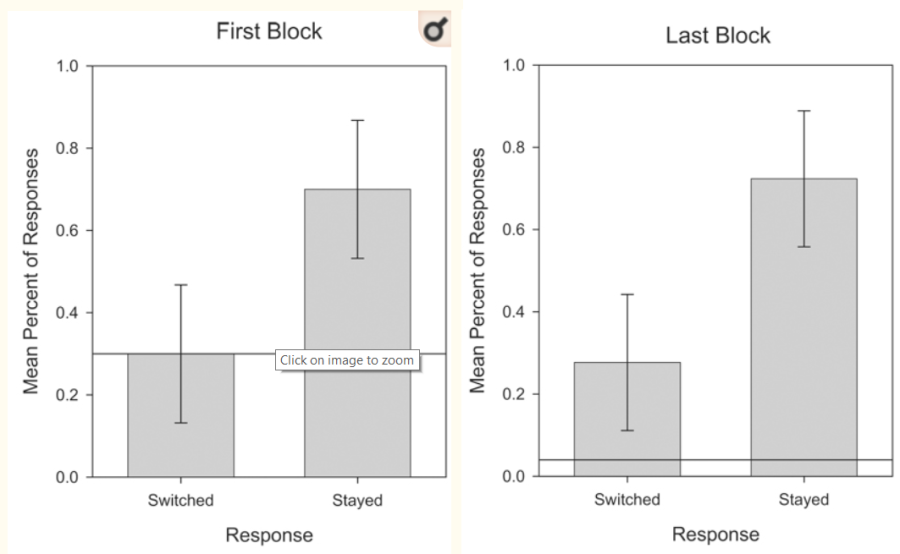

Remarkably, humans don’t update at all on the iterated game. When switching is better, a third of people refuse to switch no matter how long the game is repeated:

And when switching is worse, a third of humans keep switching:

I first saw this chart when my wife was giving a talk on pigeon cognition (I really married well). I immediately became curious about the exact sort of human who can yield a chart like that. The study these are taken from is titled Are Birds Smarter than Mathematicians?, but the respondents were not mathematicians at all.

Failing to improve one iota in the repeated game requires a particular sort of dysrationalia, where you’re so certain of your mathematical intuition that mounting evidence to the contrary only causes you to double down on your wrongness. Other studies have shown that people who don’t know math at all, like little kids, quickly update towards switching. The reluctance to switch can only come from an extreme Dunning-Kruger effect. These are people whose inability to do math is matched only by their certainty in their own mathematical skill.

This little screenshot tells you everything you need to know about psychology:

- The study had a sample size of 13, but I bet that the grant proposal still claimed that the power was 80%.

- The title misleadingly talked about mathematicians, even though not a single mathematician was harmed when performing the experiment.

- A big chunk of the psychology undergrads could neither figure out the optimal strategy nor learn it from dozens of repeated games.

Monty Hall is a cornerstone puzzle for rationality not just because it has an intuitive answer that’s wrong, but also because it demonstrates a key principle of Bayesian thinking: the importance of counterfactuals. Bayes’ law says that you must update not only on what actually happened, but also on what could have happened.

There are no counterfactuals relevant to Door A, because it never could have been opened. In the world where Door A contains the prize nothing happens to it that doesn’t happen in the world where it smells of avocado. That’s why observing that the host opens Door B doesn’t change the probability of Door A being a winner: it stays at 1/3.

But for Door C, you knew that there was a chance it would be opened, but it wasn’t. And the chance of Door C being opened depends on what it contains: 0% of being opened if it hides the prize, 75% if it’s the avocado. Even before calculating the numbers exactly, this tells you that observing the door that is opened should make you update the odds of Door C.
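To put exact numbers on the two paragraphs above, here is a small sketch of the Bayes calculation using exact fractions. It assumes, as the 75% figure implies, that the host flips a fair coin when both unpicked doors hide avocados. The variable and function names are mine, chosen for illustration.

```python
from fractions import Fraction

# You picked Door A. Before anything is opened, each door is equally likely
# to hide the prize.
prior = {"A": Fraction(1, 3), "B": Fraction(1, 3), "C": Fraction(1, 3)}

# P(host opens Door B | prize location). The host never opens your door or
# the prize door. Assumption: he flips a fair coin when the prize is behind A.
likelihood_open_b = {"A": Fraction(1, 2), "B": Fraction(0), "C": Fraction(1)}

# The mirror image: P(host opens Door C | prize location).
likelihood_open_c = {"A": Fraction(1, 2), "B": Fraction(1), "C": Fraction(0)}


def posterior(prior, likelihood):
    """Bayes' law: multiply prior by likelihood, then renormalize."""
    joint = {door: prior[door] * likelihood[door] for door in prior}
    total = sum(joint.values())
    return {door: p / total for door, p in joint.items()}


# Update on the observation "the host opened Door B".
post = posterior(prior, likelihood_open_b)

# Chance that Door C gets opened given that it hides an avocado,
# i.e. averaged over the two worlds where the prize is behind A or B.
p_open_c_given_avocado = sum(
    prior[d] * likelihood_open_c[d] for d in ("A", "B")
) / (prior["A"] + prior["B"])

for door, p in post.items():
    print(f"P(prize behind {door} | host opened B) = {p}")
print(f"P(host opens C | C hides an avocado) = {p_open_c_given_avocado}")
```

Door A stays at 1/3, Door B drops to 0, Door C rises to 2/3, and the chance of an avocado-hiding Door C being opened comes out to 3/4.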

Contra my friend, Monty Hall-esque logic shows up in a lot of places if you know how to notice it.

Here’s an intuitive example: you enter a yearly performance review with your curmudgeonly boss who loves to point out your faults. She complains that you always submit your expense reports late, which annoys the HR team. Is this good or bad news?

It’s excellent news! Your boss is a lot more likely to complain about some minor detail if you’re doing great on everything else, like actually getting the work done with your team. The fact that she showed you a stinking avocado behind the “expense report timeliness” door means that the other doors are likely praiseworthy.

Another example – there are 9 French restaurants in town: Chez Arnaud, Chez Bernard, Chez Claude etc. You ask your friend who has tried them all which one is the best, and he informs you that it’s Chez Jacob. What does this mean for the other restaurants?

It means that the other eight restaurants are slightly worse than you initially thought. The fact that your friend could’ve picked any of them as the best but didn’t is evidence against them being great.

To put some numbers on it, your initial model could be that the nine restaurants are equally likely to occupy any percentile of quality among French restaurants in the country. If percentiles are confusing, imagine that any restaurant is equally likely to get any score of quality between 0 and 100. You’d expect any of the nine to be the 50th percentile restaurant, or to score 50/100, on average. Learning that Chez Jacob is the best means that you should expect it to score 90, since the maximum of N independent variables distributed uniformly over [0,1] has an expected value of N/(N+1), which is 9/10 for N = 9. You should also update your expectation for the average score of the eight remaining restaurants down to 45.

The shortcut to figuring that out is considering some probability or quantity that is conserved after you made your observation. In Monty Hall, the quantity that is conserved is the 2/3 chance that the prize is behind one of the doors B or C (because the chance of Door A is 1/3 and is independent of the observation that another door is opened). After Door B is opened, the chance of it containing the prize goes down by 1/3, from 1/3 to 0. This means that the chance of Door C should increase by 1/3, from 1/3 to 2/3.

Similarly, being told that Chez Jacob is the best upgraded it from 50 to 90, a jump of 40 percentiles or points. But your expectation that the average of all nine restaurants in town is 50 shouldn’t change, assuming that your friend was going to pick out one best restaurant regardless of the overall quality. Since Chez Jacob gained 40 points, the other eight restaurants have to lose 5 points each to keep the average the same. Thus, your posterior expectation for them went down from 50 to 45.
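The restaurant numbers can be checked exactly. The sketch below relies on a standard result that generalizes the N/(N+1) formula: the k-th smallest of N independent uniform draws on [0,1] has an expected value of k/(N+1). It assumes the same model as above (nine independent scores, uniform between 0 and 100), and the helper name `expected_order_statistic` is made up for this illustration.

```python
from fractions import Fraction


def expected_order_statistic(k: int, n: int) -> Fraction:
    """Expected value of the k-th smallest of n independent Uniform(0, 1) draws."""
    return Fraction(k, n + 1)


n = 9  # French restaurants in town

# Expected score (out of 100) of the best restaurant, i.e. the maximum of nine.
best = 100 * expected_order_statistic(n, n)

# Expected scores of the other eight, from worst to second best.
others = [100 * expected_order_statistic(k, n) for k in range(1, n)]
others_mean = sum(others) / len(others)

# The conserved quantity: the expected average over all nine restaurants.
overall_mean = (best + sum(others)) / n

print(f"best: {best}, others on average: {others_mean}, overall: {overall_mean}")
```

The best restaurant comes out at 90, the other eight average 45, and the overall average stays at 50, which is the conservation argument in numbers.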

Here’s one more example of counterfactual-based thinking. It’s not parallel to Monty Hall at first glance, but the same Bayesian logic underpins both of them.

I’ve spoken to two women who write interesting things but are hesitant to post them online because people tell them they suck and call them $&#@s. They asked me how I deal with being called a $&#@ online. My answer is that I realized that most of the time when someone calls me a $&#@ online I should update not that I may be a $&#@, but only that I’m getting more famous.

There are two kinds of people who can possibly respond to something I’ve written by telling me I’m a $&#@:

- People who read all my stuff and are usually positive, but in this case thought I was a $&#@.

- People who go around calling others $&#@s online, and in this case just happened to click on Putanumonit.

In the first case, the counterfactual to being called a $&#@ is getting a compliment, which should make me think that I $&#@ed up with that particular essay. When I get negative comments from regular readers, I update.

In the second case, the counterfactual to me being called a $&#@ is simply someone else being called a $&#@. My update is simply that my essay has gotten widely shared, which is great.

For example, here’s a comment that starts off with “Fuck you Aspie scum”. The comment has nothing to do with my actual post; I’m pretty sure that its author just copy-pastes it on rationalist blogs that he happens to come across. Given this, I found the comment to be a positive sign of the expanding reach of Putanumonit. It did nothing to make me worry that I’m an Aspie scum who should be fucked.

I find this sort of Bayesian what’s-the-counterfactual thinking immensely valuable and widely applicable. I think that it’s a teachable skill that anyone can improve on with practice, even if it comes easier to some than to others.

Only pigeons are born master Bayesians.

I really enjoyed the Parable of the French Restaurants.

Um. Also. In defense of my species… it looks to me like you pulled your charts from this paper? In which case, I think you pulled the wrong graphs for humans. Humans come out looking… less good than pigeons, but at least not insane. (I think your human charts are pulled from Figures 1 and 7, which are for pigeons; the analogous charts for humans are Figure 5.)

(I would also expect the humans to perform at levels closer to the pigeon ideal if they had been incentivized half so effectively as the pigeons were… but I realize that “how to incentivize college students” is one of psychology’s greatest open questions.)


$&#@ humans. I read the original study but then copied the charts from this story about the study, which of course used the wrong charts. Psychology research journalism is a hundred times more annoying than even the research itself.

I fixed the chart, thanks for the catch.


The graph is still wrong. Figure 5 shows the human results for the inverse Monty Hall problem (2/3 chance of winning if you don’t switch). Figure 4 shows the results for the regular Monty Hall variant. Your ridiculing of the psychology students is unfair and not supported by the results.


Aargh, I blame this stupid article and my own carelessness. I updated the post with the right charts to be a bit less snarky about the psych undergrads and a lot more snarky about the psych professors who did the study. Thanks for your diligence!


Shouldn’t ‘Chez Jacob’ be the tenth restaurant?

I was also quite surprised when I read that several people remain unconvinced of the correct solution.

For me, the most interesting part of this problem is that you can easily see that the people who get the wrong answer usually show that they are correctly solving a different problem (in this case just a new choice between two doors).

It made me realize that in most cases, people map a situation onto completely different problems that they then solve to get an answer.

And how it is impossible for them to converge to a single answer, because they are talking about completely different things that share some similar aspects.

I did not like your examples.

In the first one there is nothing that prevents her from complaining about two things.

If she complained only about a minor detail, it means she did not complain about the others.

It is a good signal only if she likes to point out all of your mistakes.

But it might be completely useless if she had a different personality, for example if she just had to complain about something and picked the simplest of the problems (as in: https://en.wikipedia.org/wiki/Law_of_triviality#Related_principles_and_formulations).

The second one resembles more the Monty Hall problem, but the information you obtain is not that useful.

It is like if the Monty Hall had 9 doors, and one was revealed.

Your chances increase if you change, but it is not as impactful as the original problem.


First – nice article! Reading the comments was fun (clever!) as it helped to temper the psychology criticisms! The Monty Hall is one of my favorites! In trying to get students to “get it” I’ve tried LOTS of different approaches. The one that worked best for me was formed out of the idea of framing. Rather than open a loser door straight away, I start by asking if they’d prefer to keep their pick, or, have both of the other doors. (Amazingly… or not, come to think of it – they may just not trust me – a few prefer to stick with the initial selection.) Usually this gets them to switch. Before proceeding, I ask if they are expecting a prize behind BOTH doors?! No, they realize one (at least) must be empty. Framing it as keeping one vs. switching to the rest seemed (in my experience) to help most students over the conceptual hurdle.

So, keeping with framing, have you heard of the Wason Selection task?

Four cards laid out (from the USA, so let’s go stereotypical): AK47. It is given that all cards have a letter on one side and a number on the flip side. What is the fewest number of cards that must be turned (and which) to test the rule “If there is an ‘A’ on one side, there must be a ‘4’ on the other”? Most say something like “2 cards must be turned, the ‘A’ card and the ‘4’ card.” (When it’s really the ‘A’ and the ‘7’ cards.)

As presented, this is a pretty abstract problem, HOWEVER, when the question is re-framed to a familiar context: “Four people are sitting at a bar drinking a beverage. As a manager, you need to verify that no underage people are drinking alcohol. Person (1) is drinking alcohol and you cannot unambiguously estimate their age; Person (2) is drinking soda and again, you cannot unambiguously estimate their age; Person (3) is clearly of legal drinking age, but you cannot tell what they are drinking; Person (4) is clearly underage, but you cannot tell what they are drinking either. How few (and which) customers must be bothered to show proof of age?” In this case, few people have difficulty although it is structured the same as the AK47 problem. How a problem is phrased seems to affect performance. Not that this would elevate human cognition above pigeons’, though.

No need to respond; just thinking on my keyboard and you shouldn’t have to suffer too tremendously for it.


Uh oh, you’ve strayed within range of my favorite pigeon-intelligence-related fun fact, which I will take any excuse to share!

During WWII the US hired B.F. Skinner to develop a pigeon-guided bomb.

https://en.wikipedia.org/wiki/Project_Pigeon


Or else people are regular readers and usually like your work but only bother to comment when they have some nit to pick? I…may fall into that category, I’m sorry to say. Which is probably something I should change but that’s a different question.


Thanks for the article. It was a nice introduction to this technique for reasoning/updating probabilities. I’m going to try to practice this more often. A nitpick about some of the reasoning for Monty Hall:

Wikipedia has a contrived example to show that this reasoning isn’t valid, i.e. that even though Door A can never be opened, the probability that Door A is a winner can change. In short, imagine that Monty always reveals the rightmost (alphabetically last) door with a hidden avocado. If you choose Door A and Monty reveals Door C, then the probability of Door A containing the prize updates to 1/2, even though Door A could never have been opened.

So, the point is that the fact that Door A is never acted on directly does not mean it cannot be impacted by new knowledge. I’m not trying to say that your conclusions are wrong because of this contrived counterexample, just that the reasoning process is slightly off, and it seemed like the process was more the central point of the article than the actual Monty Hall Problem. People like me who are just learning this stuff might generalize the wrong rules for counterfactual reasoning.

Thanks again for the article, and sorry for the nitpick!


I’m reluctant to assume I know what makes boring TV.


Your understanding of the pigeon paper seems wrong. The humans aren’t actually told it’s a Monty Hall problem – “In particular, no story was presented”. People aren’t under-switching because of their incorrect intuitions about the problem, it’s probably because they don’t care about performing well on the task. AFAICT their only reward is “you get points and you should pretend points are good”.

When humans are actually given relevant incentives they seem to do about as well as pigeons; see https://link.springer.com/article/10.1023/A:1026209001464, which shows pretty sustained improvement over time. It doesn’t go to as many total periods as the pigeon study, but it seems plausible to extrapolate that the line would continue up to match pigeons.
