Rats v. Plague

The Rationality community was never particularly focused on medicine or epidemiology. And yet, we basically got everything about COVID-19 right and did so months ahead of the majority of government officials, journalists, and supposed experts.

We started discussing the virus and raising the alarm in private back in January. By late February, as American health officials were almost unanimously downplaying the threat, we wrote posts on taking the disease seriously, buying masks, and preparing for quarantine.

Throughout March, the CDC was telling people not to wear masks and not to get tested unless displaying symptoms. At the same time, Rationalists were already covering every relevant angle, from asymptomatic transmission to the effect of viral load, to the credibility of the CDC itself. As despair and confusion reigned everywhere into the summer, Rationalists built online dashboards modeling nationwide responses and personal activity risk to let both governments and individuals make informed decisions.

This remarkable success did not go unnoticed. Before he threatened to doxx Scott Alexander and triggered a shitstorm, New York Times reporter Cade Metz interviewed me and other Rationalists mostly about how we were ahead of the curve on COVID and what others can learn from us. I told him that Rationality has a simple message: “people can use explicit reason to figure things out, but they rarely do.”

Rationalists have been working to promote the application of explicit reason, to “raise the sanity waterline” as it were, but with limited success. I wrote recently about success stories of rationalist improvement but I don’t think it inspired a rush to LessWrong. This post is in a way a response to my previous one. It’s about the obstacles preventing people from training and succeeding in the use of explicit reason, impediments I faced myself and saw others stumble over or turn back from. This post is a lot less sanguine about the sanity waterline’s prospects.

The Path

I recently chatted with Spencer Greenberg about teaching rationality. Spencer regularly publishes articles like “7 questions for deciding whether to trust your gut” or “3 types of binary thinking you fall for”. Reading him, you’d think that the main obstacle to pure reason ruling the land is lack of intellectual listicles on ways to overcome bias.

But we’ve been developing written and in-person curricula for improving your ability to reason for more than a decade. Spencer’s work is contributing to those curricula, an important task. And yet, I don’t think that people’s main failure point is in procuring educational material.

I think that people don’t want to use explicit reason. And if they want to, they fail. And if they start succeeding, they’re punished. And if they push on, they get scared. And if they gather their courage, they hurt themselves. And if they make it to the other side, their lives enriched and empowered by reason, they will forget the hard path they walked and will wonder incredulously why everyone else doesn’t try using reason for themselves.

This post is about that path.

Alternatives to Reason

What do I mean by explicit reason? I don’t refer merely to “System 2”, the brain’s slow, sequential, analytical, fully conscious, and effortful mode of cognition. I refer to the informed application of this type of thinking: gathering data with real effort to find out, crunching the numbers with a grasp of the math, modeling the world with testable predictions, reflecting on your thinking with an awareness of biases. Reason requires good inputs and a lot of effort.

The two main alternatives to explicit reason are intuition and social cognition.

Intuition, sometimes referred to as “System 1”, is the way your brain produces fast and automatic answers that you can’t explain. It’s how you catch a ball in flight, or get a person’s “vibe”. It’s how you tell at a glance the average length of the lines in the picture below but not the sum of their lengths. It’s what makes you fall for the laundry list of heuristics and biases that were the focus of LessWrong Rationality in the early days. Our intuition is shaped mostly by evolution and early childhood experiences.

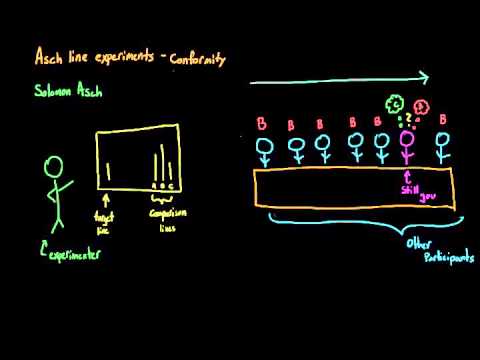

Social cognition is the set of ideas, beliefs, and behaviors we employ to fit into, gain status in, or signal to groups of people. It’s often intuitive, but it also makes you ignore your intuition about line lengths and follow the crowd in conformity experiments. It’s often unconscious — the memes a person believes (or believes that they believe) for political expediency often just seem unquestionably true from the inside, even as they change and flow with the tides of group opinion.

Social cognition has been the main focus of Rationality in recent years, especially since the publication of The Elephant in the Brain. Social cognition is shaped by the people around you, the media you consume (especially when consumed with other people), and the prevailing norms.

Rationalists got COVID right by using explicit reason. We thought probabilistically, and so took the pandemic seriously when it was merely possible, not yet certain. We did the math on exponential growth. We read research papers ourselves, trusting that science is a matter of legible knowledge and not the secret language of elevated experts in lab coats. We noticed that what is fashionable to say about COVID doesn’t track well with what is useful to model and predict COVID.
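To make “the math on exponential growth” concrete, here is a minimal sketch. The starting case count and the doubling time are assumptions chosen purely for illustration, not figures from this post or from real data, and the function name is hypothetical.

```python
def projected_cases(initial_cases: float, doubling_time_days: float, days: float) -> float:
    """Cases after `days` under pure exponential growth.

    Assumes a constant doubling time, no interventions, and no saturation.
    """
    return initial_cases * 2 ** (days / doubling_time_days)


# Hypothetical inputs for illustration only, not real COVID data.
INITIAL_CASES = 100
DOUBLING_TIME_DAYS = 3

# Print the projection at a few checkpoints over six weeks.
for day in (0, 15, 30, 45):
    print(day, round(projected_cases(INITIAL_CASES, DOUBLING_TIME_DAYS, day)))
```

Under those assumed numbers, 100 cases become roughly 100,000 within a month. That is the kind of arithmetic that makes a threat worth acting on while it is still merely possible.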

On February 28th, famous nudger Cass Sunstein told everyone that the reason they’re “more scared about COVID than they have any reason to be” is the cognitive bias of probability neglect. He talked at length about university experiments with electric shocks and gambles, but neglected to calculate any actual probabilities regarding COVID.

While Sunstein was talking about the failures of intuition, he failed entirely due to social cognition. When the article was written, prepping for COVID was associated with low-status China-hating reactionaries. The social role of progressive academics writing in progressive media was to mock them, and the good professor obliged. In February people like Sunstein mocked people for worrying about COVID in general, in March they mocked them for buying masks, in April they mocked them for hydroxychloroquine, in May for going to the beach, in June for not wearing masks. When someone’s view of COVID is shaped mostly by how their tribe mocks the outgroup, that’s social cognition.

Underperformance Swamp

The reason that intuition and social cognition are so commonly relied on is that they often work. Doing simply what feels right is usually good enough in every domain you either trained for (like playing basketball) or evolved for (like recoiling from snakes). Doing what is normal and fashionable among your peers is good enough in every domain your culture has mastered over time (like cooking techniques). It’s certainly good for your own social standing, which is often the main thing you care about.

Explicit rationality outperformed both on COVID because responding to a pandemic in the information age is a very unusual case. It’s novel and complex, long on available data and short on trustworthy analysis, abutting on many spheres of life without being adequately addressed by any one of them. In most other areas reason does not have such an inherent advantage.

Many Rationalists have a background in one of the few other domains where explicit reason outperforms, such as engineering or the exact sciences. This gives them some training in its application, training that most people lack. Schools keep talking about imparting “critical thinking skills” to all students but can scarcely point to much success. One wonders if they’re really motivated to try — will a teacher really have an easier time with 30 individual critical thinkers rather than a class of password-memorizers?

Then there’s the fact that most people engaged enough to answer a LessWrong survey score in the top percentile on IQ tests and the SAT. Quibble as you may with those tests, insofar as they measure anything at all they measure the ability to solve problems using explicit reason. And that ability varies very widely among people.

And so most people who are newly inspired to solve their problems with explicit reason fail. Doubly so since most problems people are motivated to solve are complicated and intractable to System 2 alone: making friends, losing weight, building careers, improving mental health, getting laid. And so the first step on the path to rationality is dealing with rationality’s initial failure to outperform the alternatives.

Sinkholes of Sneer

Whether someone gives up after their initial failure or perseveres to try again depends on many factors: their personality, context, social encouragement or discouragement. And society tends to be discouraging of people trying to reason things out for themselves.

As Zvi wrote, applying reason to a problem, even a simple thing such as doing more of what is already working, is an implicit accusation against everyone who didn’t try it. The mere attempt implies that you think those around you were too dumb to see a solution that required no gifts or revelations from higher authority, but mere thought.

The loudest sneers of discouragement come from those who tried reason for themselves, and failed, and gave up, and declared publicly that “reason” is a futile pursuit. Anyone who succeeds where they failed indicts not merely their intelligence but their courage.

Many years ago, Eliezer wrote about trying the Shangri-La diet, a strange method based on a novel theory of metabolic “set points” and flavor-calorie dissociation. Many previous casualties of fad diets scoffed at this attempt, not because they spotted a clear flaw in the Shangri-La theory but because of Eliezer’s mere hubris in trying to outsmart dieting and lose weight without applying willpower.

Oh, you think you’re so much smarter? Well let me tell you…

A person who is just starting (and mostly failing) to apply explicit reason doesn’t have confidence in their ability, and is very vulnerable to social pressure. They are likely to persevere only in a “safe space” where attempting rationality is strongly endorsed and everything else is devalued. In most normal communities the social pressure against it is simply too strong.

This, I think, is the main purpose of LessWrong and the Rationalist community, and of similar clubs throughout history and around the world. To outsiders it looks like a bunch of aspie nerds who severely undervalue tact, tradition, intuition, and politeness, building an awkward and exclusionary “ask culture”. They’re not entirely wrong. These norms are too skewed in favor of explicit reason to be ideal, and mature rationalists eventually shift to more “normie” norms with their friends. But the nerd norms are just skewed enough to push the aspiring rationalist to practice the craft of explicit reason, like a martial arts dojo.

Strange Status and Scary Memes

But not all is smooth sailing in the dojo, and the young rationalist must navigate strange status hierarchies and bewildering memeplexes. I’ve seen many people bounce off the Rationalist community over those two things.

On the status front, the rightful caliph of rationalists is Eliezer Yudkowsky, widely perceived outside the community to be brash, arrogant, and lacking charisma. Despite the fact of his caliphdom, arguing publicly with Eliezer is one of the highest-status things a rationalist can do, while merely citing him as an authority is disrespected.

People like Scott Alexander or Gwern Branwen are likewise admired despite many people not even knowing what they look like. Attributes that form the basis of many status hierarchies are heavily discounted: wealth, social grace, credentials, beauty, number of personal friends, physical shape, humor, adherence to a particular ideology. Instead, respect often flows from disreputable hobbies such as blogging.

I think that people often don’t realize that their discomfort with rationalists comes down to this. Every person cares deeply and instinctively about respect and their standing in a community. They are distressed by status hierarchies they don’t know how to navigate.

And if that wasn’t enough, rationalists believe some really strange things. The sentence “AI may kill all humans in the next decade, but we could live forever if we outsmart it — or freeze our brains” is enough to send most people packing.

But even less outlandish ideas cause trouble. The creator of rationality’s most famous infohazard observed that any idea can be an infohazard to someone who derives utility or status from lying about it. Any idea can be hazardous to someone who lacks a solid epistemology to integrate it with.

In June a young woman filled out my hangout form, curious to learn more about rationality. She’s bright, scrupulously honest, and takes ideas very seriously, motivated to figure out how the world really works so that she can make it better. We spent hours and hours discussing every topic under the sun. I really liked her, and saw much to admire.

And then, three months later, she told me that she doesn’t want to spend time with me or any rationalists anymore because she picked up from us beliefs that cause her serious distress and anxiety.

This made me very sad and also perplexed, since the specific ideas she mentioned seem quite benign to me. One is that IQ is real, in the sense that people differ in cognitive potential in a way that is hard to change as adults and that affects their potential to succeed in certain fields.

Another is that most discourse in politics and the culture war can be better understood as signaling, a way for people to gain acceptance and status in various tribes, than as behavior directly driven by an ideology. Hypocrisy is not an unusually damning charge, but the human default.

To me, these beliefs are entirely compatible with a normal life, a normal job, a wife, two guinea pigs, and many non-rationalist friends. At most, they make me stay away from pursuing cutting-edge academic mathematics (since I’m not smart enough) and from engaging in political flame wars on Facebook (since I’m smart enough). Most rationalists believe these to some extent, and we don’t find it particularly remarkable.

But my friend found these ideas destabilizing to her self-esteem, her conception of her friends and communities, even her basic values. It’s as if they knocked out the ideological scaffolding of her personal life and replaced it with something strange and unreliable and ominous. I worried that my friend shot right past the long path of rationality and into the valley of disintegration.

Valley of Disintegration

It has been observed that some young people appear to get worse at living and at thinking straight soon after learning about rationality, biases, etc. We call it the valley of bad rationality.

I think that the root cause of this downturn is people losing touch entirely with their intuition and social cognition, and trying instead to make or justify every single decision with explicit reasoning. This may come from being overconfident in one’s reasoning ability after a few early successes, or from anger at all the unreasoned dogma and superstition one has to unlearn.

A common symptom of the valley is the bucket error, in which beliefs that don’t necessarily imply one another are entangled together. Bucket errors can cause extreme distress or make you flinch away from entire topics to protect yourself. I think this may have happened to my young friend.

My friend valued her job, and her politically progressive friends, and people in general, and making the world a better place. These may have become entangled, for example by thinking that she values her friends because their political activism is rapidly improving the world, or that she cares about people in general because they each have the potential to save the planet if they worked hard. Coming face to face with the ideas of innate ability and politics-as-signaling while holding on to these bucket errors could have resulted in a sense that her job is useless, that most people are useless, and that her friends are evil. Since those things are unthinkable, she flinched away.

Of course, one can find good explicit reasons to work hard at your job, socialize with your friends, and value each human as an individual, reasons that have little to do with grand scale world-improvement. But while this is useful to think about, it often just ends up pushing bucket errors into other dark corners of your epistemology.

People just like their friends. It simply feels right. It’s what everyone does. The way out of the valley is not to reject this impulse for lack of journal citations but to integrate your deep and sophisticated friend-liking mental machinery with your explicit rationality and everything else.

The way to progress in rationality is not to use explicit reason to brute-force every problem but to use it to integrate all of your mental faculties: intuition, social cognition, language sense, embodied cognition, trusted authorities, visual processing… The place to start is with the ways of thinking that served you well before you stumbled onto a rationalist blog or some other gateway into a method and community of explicit reasoners.

This idea commonly goes by metarationality, although it’s certainly present in the original Sequences as well. It’s a good description for what the Center for Applied Rationality teaches — here’s an excellent post by one of CFAR’s founders about the valley and the (meta)rational way out.

Metarationality is a topic for more than two paragraphs, perhaps for an entire lifetime. I have risen out of the valley — my life is demonstrably better than before I discovered LessWrong — and the metarationalist climb is the path I see ahead of me.

And behind me, I see all of this.

So what to make of this tortuous path? If you’re reading this you are quite likely already on it, trying to figure out how to figure things out and dealing with the obstacles and frustrations. If you’re set on the goal, this post may offer some advice to help you on your way: try again after the early failures, ignore the sneers, find a community with good norms, and don’t let the memes scare you. It all adds up to normalcy in the end. Let reason be the instrument that sharpens your other instruments, not the only tool in your arsenal.

But the difficulty of the way is mostly one of motivation, not lack of instruction. Someone not inspired to rationality won’t become so by reading about the discouragement along the way.

And that’s OK.

People’s distaste for explicit reason is not a modern invention, and yet our species is doing OK and getting along. If the average person uses explicit reason only 1% of the time, the metarationalist learns that she may up that number to 3% or 5%, not 90%. Rationality doesn’t make one a member of a different species, or superior at all tasks.

The rationalists pwned COVID, and this may certainly inspire a few people to join the tribe. As for everyone else, it’s fine if this success merely raises our public stature a tiny bit, lets people see that weirdos obsessed with explicit reason have something to contribute. Hopefully it will make folk slightly more likely to listen to the next nerd trying to tell them something using words like “likelihood ratio” and “countersignaling”.

Because if you think that COVID was really scary and our society dealt with it really poorly — boy, have we got some more things to tell you.

“Instead, respect often flows from disreputable hobbies such as blogging.”

Yuck

Slightly disagree here. I think the “bucket error” explanation is most of it. For the rest, it’s a mismatch between the expected usefulness of System 1 thinking and the actual usefulness. Experienced rationalists know roughly how much value they’ll get from System 1 (e.g. very high value for COVID, very low value for a bar fight). New learners are very poor at that.

I don’t think you’ve supported the premise of your argument, that Rationalists “got COVID right”, strongly enough. Linking to a couple posts that discuss things that ultimately turned out to be correct is pretty weak evidence for the strength of this claim.

Without stronger substantiation of the underlying claim, the rest of this article falls apart.

This is very much an ‘insider’ story so it’s reasonable for you to be skeptical if you don’t know more of the details already.

(Knowing that the “underlying claim” is ‘true’ is very much a meta-rational ‘achievement’, especially given how nebulous/ambiguous the claim is itself.)

Are you able to link to a couple of examples of rationalists getting it right? It fits with what I’ve seen–a couple of people I know to be involved with rationalism were going on about it back in January–but you may have more examples to substantiate your claim.

I can’t think of any ‘slam dunks’ – that are also neatly packaged into something small and self-contained to which I could point someone.

Another problem with the evidence of rationalists getting it right is that it’s easy for anyone insufficiently sympathetic, or with a large enough gap in common beliefs, to dispute that they did get it right. That’s one of the general costs of rationality (or reasoning, or even thinking) – you might end up believing something different than other people (‘social cognition’ beliefs). Reaching those conclusions is one thing; convincing or persuading other people of them is much, much harder.

One example that’s helpfully not-very-political is the ‘use LOTS of light that mimics the sun’ as a treatment for SAD (seasonal affective disorder). I’m pretty sure I suffer from SAD and using a very bright sun-like-lamp has seemed to help me a lot. But I don’t think there’s a big enough body of scientific literature by which you could convince most people of this.

And that leads to another big pit of woe – the tawdry reality of science as a social (and epistemic) phenomenon, and the limits of science implied by that reality. I think it’s an extremely common belief among ‘rationalists’ (and rationalist-adjacents) that some of the ‘scientific consensus’ is wrong and some true facts are not part of any scientific consensus.

To me, with respect to COVID-19, the ‘rationalists’ got it right by thinking about the (at-the-time possible) pandemic at all. ‘Social cognition’ feels like (and is) thinking, but the kind of reasoning implied by ‘rationality’ is a whole different (and much more laborious) activity. That we do it at all is ‘getting it right’ – not because we’re going to win every time; more that you can’t win (in this way) if you don’t play at all.

It also turned out that we were entirely reasonable to worry about a COVID-19 pandemic. So we’ve got that going for us.

Arguably AI x-risk is another thing we got right – it’s at least discussed seriously and openly by ‘mainstream’ people (e.g. celebrities).

I’ll try to think of any other possible ‘slam dunks’ and others might have good candidates to suggest as well.

I’m constantly torn between hope and disappointment in rationality. This post feels like a much needed observation of common failure modes and one of your best submissions – and yet, despite all the clarifications it provides, it seems to miss something crucial and yucky about rationalist spheres.

Maybe there’s not much to rationality beyond high IQ and the introductory content, with the “advanced training” not being worth the effort? Maybe it conditions you to use too many cognitive resources on a daily basis, so you’re more tired and less “agenty” when you should rely on intuition, social cognition or social skills? Maybe the rationalist’s advantage is so small you can’t really detect it while things in your life go well (or fall apart) due to external circumstances? Or maybe rationality makes you acutely aware of the pressing problems you can’t solve, so it shows you a true but very distressing part of reality? I don’t know.

Overall, this previous comment seems to be particularly relevant:

https://putanumonit.com/2019/12/08/rationalist-self-improvement/#comment-49478

Rationality, and especially the “advanced training”, is definitely not worth the effort – for most people. (I took that as a big point of this post.)

But some very awesome events were only possible because of it – and not some pure undiluted perfect manifestation either, but just enough.

It is necessary for certain things, but almost never sufficient on its own.

I’m a software person (programmer) so it’s a big source of metaphors for me, but I (now) think of one of the problems of ‘The Path to Reason’ as being primarily equivalent to ‘inheriting legacy code’, i.e. having to work with code that someone wrote ‘long ago’ (e.g. years prior, or maybe even as late as a couple of weeks past). Except in the case of rationality, ‘pre-rational’ thinking is at least familiar!

And the biggest trap with inheriting legacy code – a veritable pit into which people knowingly and enthusiastically fling themselves and others – is rewriting too much of the code. The worst cases are those where people decide to rewrite all of the code at once.

Note tho that it is (strictly) possible to do this successfully. It is, sadly, tho, extremely rare. (And besides the technical achievement of ‘successfully’ rewriting some code, there are almost always a plethora of other avenues by which the encompassing project can still fail.)

But, in general, the far better way to rewrite code is not en-masse, but by ‘refactoring’ it (and, ideally, by creating a very large number of automated tests by which you can hopefully gain enough confidence that the new code works like the old).

And I think that’s what anyone that pursues rationality realizes – after falling into these traps a few times (at least).

And it’s not like the point hasn’t been made, but it’s another thing again to ‘integrate’ it into everything else.

This is all also complicated by the ongoing and inevitable fact that most people are not rational and are, mostly, engaging in social cognition. Or, rather, all of the not-quite rational but eminently reasonable thinking people do, as well as lots of mostly-private rational thinking people do, is mostly invisible to others and thus mostly ignored in general. But the most visible thinking is either intuition or social cognition. And they’re both very much immune (tho not perfectly) to rational arguments.

So – even if you, personally, think rationally and either update your beliefs or act more effectively – you can be penalized for expressing your new more accurate beliefs or allowing others to see you act differently from them. You must, in many cases, act strategically to benefit from rationality. (This isn’t just true for rationality either tho.)

“The rationalist’s advantage” can be, and often is, absolutely swamped by other factors. (That’s a true and potentially distressing part of reality.)

It’s not inevitable that one must be (or remain) “acutely aware of the pressing problems you can’t solve” or that that awareness must be “distressing” (to the degree one thinks it is true). But this has been a big part of my own path – not just what to do about distressing things, or about the distress itself, but how to think about it all.

But that’s all part of the path, and it’s all been discussed too, at times at considerable length.

But it’s also true that there’s not a lot of shortcuts to reach ‘enlightenment’.

But the reason to persist – the only real reason to do anything really – is because you care; because you have something to protect.

Some pretty amazing things were possible because Isaac Newton was an autist who derived his laws of mechanics…but if you or I decided to live that life, we wouldn’t derive anything that impressive, because we’re not as smart as Newton. (Well, I know I’m not.)

Sure – but ‘living that life’, for some period anyways, i.e. to try it out, isn’t (necessarily) that expensive, so possibly a good thing for individuals to test for themselves.

(Unless you’re including being autistic or being born a genius in ‘living that life’.)

This is a great post and matches my own path (in a more distant orbit) pretty well.

Thanks for keeping this journal of your hike!

Great post! You’ve just described “The Hero’s Journey” for rationalists it seems.

Though I hope we are not falling into the trap of Effort Justification? https://en.m.wikipedia.org/wiki/Effort_justification

I do believe it’s worth it. The proof is solid, right? We’re not rationalizing, not us.

I can speak only for myself.

So in the very late 2000s when LessWrong and the like were coming up…I had finally decided to start dating, and therefore wasted my time reading Roissy in DC (and later Chateau Heartiste), in their earlier, pre-Nazi incarnation back when the blog was about, you know, getting laid, something of interest to men well left of Hitler. I had drifted there from Half Sigma, a quasi-neoreactionary-avant la lettre blog, and there from Sailer, who I discovered after googling ‘evolutionary conservative’.

(Basically, in the mid-2000s I had read all these books about how the right had lied to me about environmental destruction, persecution of African Americans and Native Americans…and found that I had heard it all already in my (quite liberal) high school history class. So of course I wondered…what is the left lying to me about?)

Anyway, what I figured was, “These rationalist guys are a bunch of scientists and nerds, and these people are going to be very good at putting us on Mars and curing cancer, all very laudable things for humanity as a whole. But I’m coming up on 30, and I need to get laid. Nerds, like me, are bad at that. If I read these guys, I’ll just get more of the same, and that’s not what I need at this point in time.”

“OK, some big Silicon Valley people are into polyamory, which sounds kinky and fun. But they’re rich and at the top of the group, and therefore benefit from high status, which is well known to attract women for perfectly logical evolutionary reasons, just like men are attracted to body shapes signifying fertility. So the fact that a couple of these rationalist guys are successful doesn’t change the fact that if a shlub like me got deeper into this stuff, I’d just wind up boring everyone with obscure jokes about paperclip maximization. Besides, I don’t have the computer science background to know if they’re right about this AI stuff. It doesn’t sound crazy–an out-of-control bulldozer can kill people, and if you give a machine control over everything it stands to reason it could wipe out humanity–but I’m not in a position where I could change things anyway.”

Did it work? Kind of. It could just be that I started putting myself out there and being a little more aggressive (and, well aware of my own limitations, I dialed the PUA stuff way, way back from what people there were saying).

But if you’re wondering why people don’t become rationalists…well, this is why one (IQ 145 with a math-related degree, and therefore squarely in your target demographic) person didn’t.

Again, n=1.

That’s too bad!

Did you read rationalist stuff too? You must have read some of it given that you’re able to reference a bunch of it.

Less Wrong was originally an effort to ‘raise the sanity water line’, specifically to be able to mitigate AI existential risk.

But that’s not the main reason I’ve stuck around (tho mostly lurking and sometimes commenting). And I’m pretty sure that’s true for a lot of other people. Rationality (and now meta-rationality) is about anything and everything and a lot of us are quite content to mostly follow-along about the AI x-risk stuff from a distance.

I don’t think you should let any specific aspect of (specific) ‘rationalists’ put you off the whole ‘movement’. There are so many other aspects you’d probably find insightful and even useful, especially out among the wider ‘diaspora’. And since the LW revival, I’ve found the content to be comfortably weird and all over the place.

You don’t have to drink any particular amount of our Kool-Aid; just however much, and whatever flavors, you enjoy.


Not really a Kool-Aid thing; more ‘these people don’t have the answer to the particular question I have’.

Though I did kind of admire Yudkowsky for getting his own sex slave. ;)


You have to know that this kind of statement is catnip to us!

What was your particular question?


I wanted to get laid. ;)

Jacob here seems to have a handle on it, but this was about a decade ago.


I should add that I do not support sex slavery except in a purely consensual 24/7 D/s fashion with appropriate safewords, etc.

I was more making a joke that at least one rationalist appeared to be living out a common male fantasy.


I’d also add that fun as it is to criticize the Blue Tribe for ignoring this back in March in their usual perfervid fear of anything that might be construed as possibly encouraging people who might potentially be led to something approximating racism (and I’m not being totally facetious…I hate SJWs too), it’s the Red Tribe that is now holding protests against masks.

I’m seriously considering joining my local Republican Party, but the skepticism about masks and coronavirus is a big turnoff. Any political movement requires you to affirm some BS, but this is lethal BS.


P.S. You guys are probably right about A.I. risk. But there’s not much I can do about it, so…


To me, one potential obstacle might be the following. Here’s a “vibe” I get from reading a lot of rationalist content:

“You know all the scientists, academics, doctors, activists, journalists, educators, and everyone else who have spent their lives trying to understand the world and make it better? Don’t listen to them. Sure, read their papers and stuff to get background information to input into your own decision-making processes, but don’t trust them to actually give you good advice or make good decisions themselves. They’re all hopelessly biased, incompetent, innumerate, status-obsessed, and incapable of seeing the obvious. Just listen to us rationalists instead. Even if it’s something that we’ve just spent a few afternoons reading papers about, and they’ve spent their whole lives thinking about similar things, you should believe us because we have superior rationality skills.

And you’re thinking that since we have all these great insights that everyone else is ignoring, maybe it would be a good idea to publicize them so that other people can benefit and also that we get an independent check on our thinking? That’s probably a bad idea too. The mainstream is too ignorant and hidebound to understand what we’re talking about, and they would probably just make us look bad. Instead, we should stay under the radar and try to avoid mainstream attention. That does mean that this knowledge generally stays within our small group, but that’s okay because we’re going to be the ones that shape the future of the world with all the cool projects we’re working on, so we’re the ones that really need these insights.”

Now, just to clarify:

– I’m not saying that the above is a message that anyone intends to send,

– I’m not saying that the above accurately describes any one individual’s viewpoints (especially since this is sort of a composite of a lot of different posts from different people, and of course not everyone who identifies as “rationalist” believes the same things)

– I’m not saying that the statement above is (on the object level) inaccurate in all cases. (Sometimes all the experts really are biased.)

But I am saying that that is the impression I get from some rationalist writing, even if it isn’t accurate. And if you get that impression, it’s easy to see why rationalism might feel alienating (and not because of “signaling” or “low status”). You especially get this from phrases like “raise the sanity waterline” – it feels like rationalists are calling the rest of the world insane.

—

More specifically, a lot of rationalist writing already assumes the reader will accept as true statements which seem strange to most. For instance, a lot of writing about “signaling” follows the following pattern:

(1) Most people do X, and say they’re trying to achieve A.

(2) But Y would be a much better way of achieving A.

(3) That proves that people don’t care about A, and are just signaling.

Oftentimes part (2) is just assumed, and not a lot of effort is spent supporting it. I’m thinking in particular of a lot of Robin Hanson’s writing, where Y = “prediction markets” and A = “almost anything” – this is not likely to be convincing to anyone who doesn’t already believe in the superiority of prediction markets.

—

When I read this post I’m thinking of this in the following terms: If I were to write a book that was basically a “pitch for rationalism”, trying to convince people why rationalism was so great and why they should stick with it, I would probably do the following:

– Just as you do, talk about COVID-19 as a success story for rationalism. But of course, tell the whole story of what rationalists came up with and when; don’t just say “this is an insider story” (which obviously won’t convince anyone). (Also, I think the claim that rationalists got everything about COVID-19 right is an exaggeration. I definitely remember comments on LW suggesting that you stockpile hydroxychloroquine or figure out DIY ventilator setups; neither of these ended up being useful or necessary.)

– For things like the microcovid project, I would advise showing it to mainstream epidemiologists (who have experience building these types of models) and seeing what they think. If they say that it’s a good idea, then that will reinforce the point that you got things right earlier than everyone else. If they say it’s not, then you can use this to contrast rationalist thinking with mainstream epidemiology, and make your case that rationalist thinking is superior. But just saying “this is a great tool we built” without talking to others who are experts is likely to be less convincing (just like someone who claimed to discover a new theory of physics, but hasn’t talked to other physicists to see what they think, would be less convincing).

– Avoid saying things that sound like accusing others of not really caring. For instance, rather than say things like “activists who do X are just signaling their status; if you really care about the cause you should do Y instead”, say “activists who do X really care about their cause, but they could achieve even more if they do Y instead.” If it is necessary to blame others’ behavior on signaling, do so with specific evidence: e.g. “80 percent of people say they believe Z when you ask the question in public, but only 30 percent of people still believe Z when you ask them on an anonymous survey”

– Try to link things back explicitly to concepts that most people in your target audience are already aware of. For instance, I think most of them would be aware that sources have biases and that those have to be taken into account. What rationalism adds is that in addition to well-known biases (e.g. News Source A is biased toward Political Party B; Politician C is biased toward exaggerating the benefits of his proposed policy) there are also things like “people are biased toward running the same playbook that they’ve run before, even if circumstances have changed” (that’s likely what happened to Cass Sunstein; he’s had to deal with people getting scared about overblown risks in the past) and “people are biased toward going with the herd, even if there are good reasons to think the herd is wrong”.

– As you mentioned, part of the reason that people use “social cognition” or other non-explicit-reason heuristics is that they work most of the time. You could recognize that, but also point out that the times when the heuristics don’t work are the times when there’s the most value in getting it right, and talk about how to identify those times. For example:

So basically the pitch here would be that even though rationality might seem to force you to wade through a bunch of bogus-sounding ideas, it can help you find the “diamonds in the rough.”

– One framework one could use is something like:

(1) Pick a particular area that you think really affects people’s daily life, but the mainstream advice is lacking (so rationalism is necessary)

(2) Talk through how to use rationality to gain insights into that field, with at least one or two actionable conclusions.

(3) Repeat steps (1) and (2) with a variety of different fields, so the reader can see how rationality techniques apply to different fields.


This is great criticism!

The ‘problem’ is that responding to your (valid and fairly accurate) points is (a LOT of) work.

And what would that work look like?

I imagine it would look a lot like what Andrew Gelman’s doing on his blog: fighting an uphill battle about, e.g. shoddy statistics, and bad research practices – and among professional scientists publishing in leading research journals!

And, in general, in my experience, this is a common pattern everywhere, in every subject or topic or activity: there is a frontier, epistemically and instrumentally, and it is sparsely populated.

Note that that pattern also seems fairly independent of how well those fields do overall, epistemically and instrumentally. In the sciences, the hard sciences seem relatively okay; the soft sciences seem much less so.

But this pattern’s everywhere – not just in the sciences, or academia. It can be found in politics, militaries, industries, individual businesses, and the pattern is fractal too, all the way down to the behavior of individual people.

I think it’s also a common pattern for people that (think they) are on some frontier to socialize with each other. Mostly I think of us as being a ‘weird’ (and very loosely organized) social group.

And based on their ‘revealed preferences’, (most) ‘rationalists’ aren’t much interested in building a mass movement. That seems pretty sensible when you consider that Andrew Gelman and others are struggling to enlist others in ‘movements’ of much smaller scope, and with much more selective targets. Atul Gawande is just trying to get people to use checklists more often. What hope could we have of doing that much better than them on such a hugely general scale? (I’d rate both Gelman and Gawande as very rational people too!)

And my own experience trying to persuade others of ‘rationalist’ beliefs, or even just trying to explain them, is that it is generally hard and potentially arbitrarily difficult.

I’ll admit that ‘raise the sanity water line’ is pretty derogatory! But it’s not even in the same order-of-magnitude-ballpark as standard political nastiness (or worse), and we’re not robots (yet) – we’re going to be emotional about some of this stuff in a way that’s not understandable by outsiders of sufficient remove. (I think the idea that people are generally ‘insane’ is actually pretty widely held; but just what exactly is ‘insane’ is wildly different among different people!)


“The experts are biased, listen to us instead” is a bad argument, and one that a good rationalist wouldn’t accept on the face of it. The place to deploy it is when you’ve run the numbers and someone objects to your conclusion not because they can spot an error in the math but because “how can you disagree with all these credentialed agency experts”.

Either way, that’s an argument for trusting rationalists’ authority (and not a recruitment pitch). But rationalists themselves trust explicit models over authority 90%+ of the time, so if you’re wondering whether to believe rationalists or someone else just ask to see the numbers.


I read that post back in the day, when I had only just started on my journey to rationality. It sounded like an interesting thing I was obviously going to have to watch out for. Now, it looks like I haven’t at all. Instead, I’ve gotten into a political science degree, which may be the worst place from which to fall into the valley of bad rationality. And I wouldn’t have guessed, even after reading it here, that the valley would be so uncomfortable. This article remains a great resource on the topic, even though it’s a bit old. That’s why I’m reviving this comment section to ask if you folks have tips you think I might overlook in my journey out of the hole and back on track.


When I wandered the valley of bad rationality, it was for the two years where I completed my undergrad degree and got started as a software engineer. My biggest issue was around IQ.

While understanding IQ as innate made me vastly more empathetic to struggles others had, it also made me incredibly frustrated and fatalistic in dealing with my own goals. I mistook a lack of training and practice for a lack of intelligence and caused myself quite a bit of harm over those two years.

First, I understudied formal math and physics for the undergraduate research I was trying to do, and then felt instead that I wasn’t smart enough for the field. In actuality, one year of study would have been enough to unblock me.

Secondly, I treated practicing for software interviews as a process of repeatedly testing my intelligence, rather than as becoming good at a new skill. When I learned to treat it as a skill to improve at, I was able to become much better, and interview much more effectively.


Thank you! I like the story of the young woman who retreated – I know that fear, that feeling of being overwhelmed with new ideas to integrate, that temptation to return to the cave. I hope she finds her way!


Another possible reason for resistance to rationality is that many people want to feel like they are in control, or want to feel like they are making a choice for themselves, or dislike being told what to do.
